
‘We sometimes see aspects of each others’ mental lives, and thereby come to have non-inferential knowledge of them.’

McNeill (2012, p. 573)

How do you know about it? (it = this pen; it = this joy)

perceive indicator, infer its presence

- vs -

perceive it

challenge

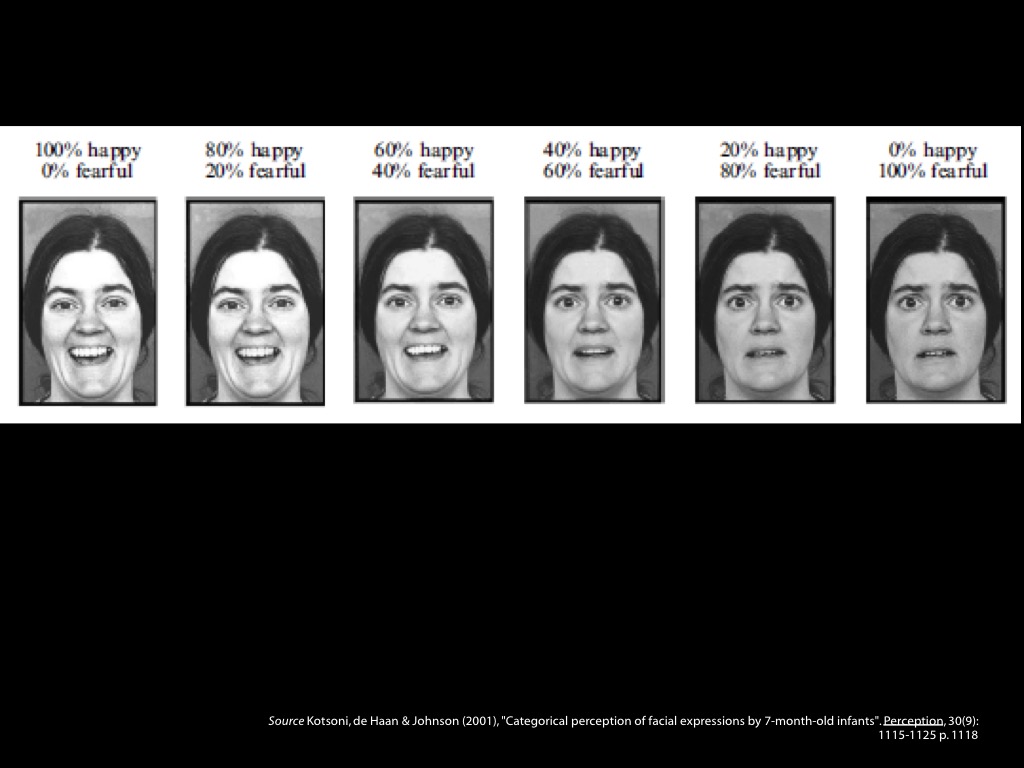

Evidence? Categorical Perception!

informal observation, guesswork (‘intuition’) and elegance

Smith: Red Tomato

‘If someone with normal color vision looks at a tomato in good light, the tomato will appear to have a distinctive property—a property that strawberries and cherries also appear to have, and which we call ‘red’ in English’

(Byrne & Hilbert 2003, p. 4)

It is a ‘subject-determining platitude’ that ‘“red” denotes the property of an object putatively presented in visual experience when that object looks red’

(Jackson 1996, pp. 199–200)

‘We sometimes see aspects of each others’ mental lives, and thereby come to have non-inferential knowledge of them.’

McNeill (2012, p. 573)

challenge

Evidence? Categorical Perception!

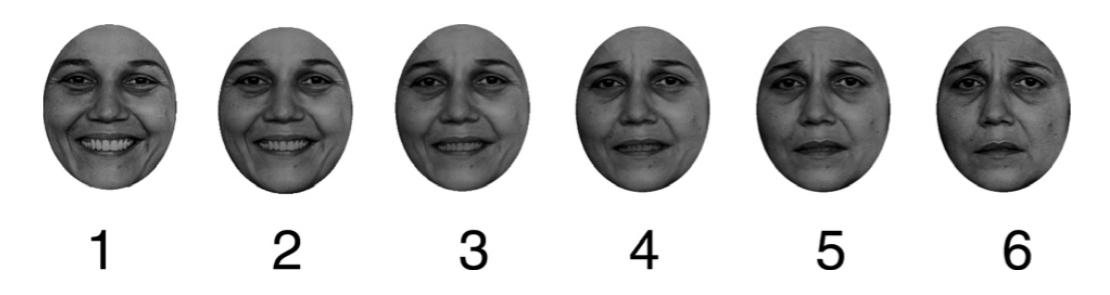

Cheal and Rutherford, 2011 figure 1

Cheal and Rutherford, 2011 figure 4

But could it be merely an effect of unvoiced labelling?

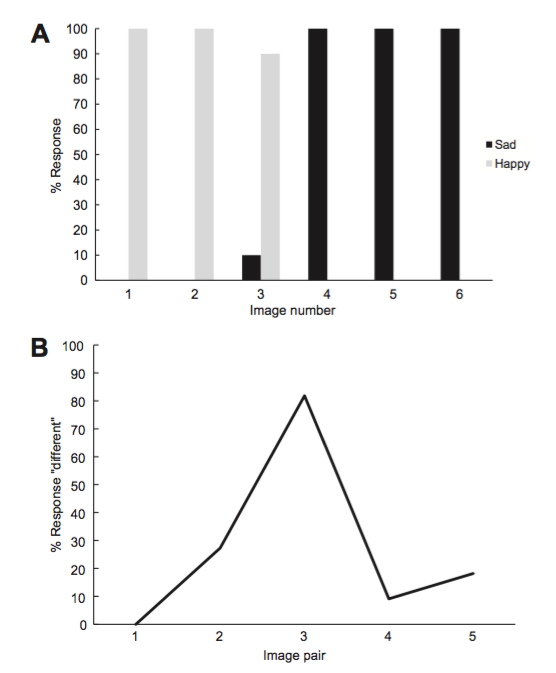

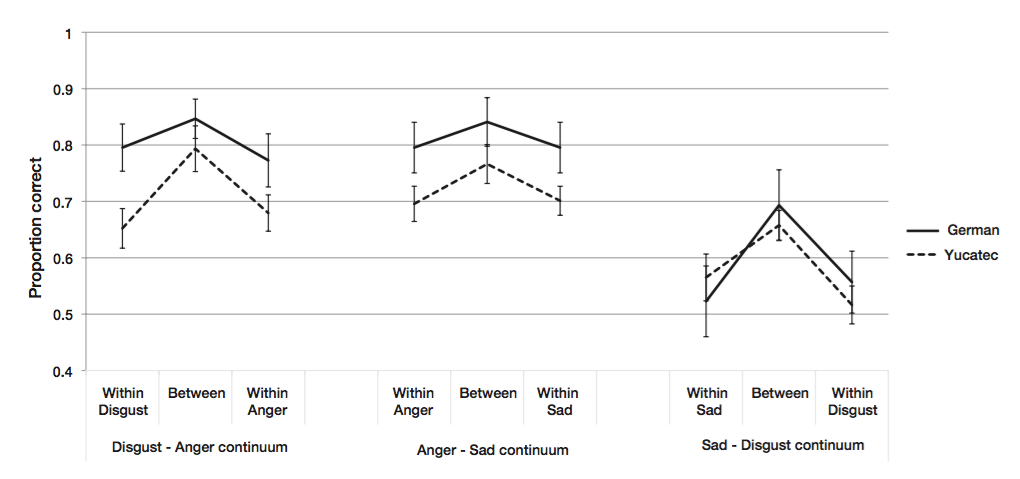

‘facial expressions of emotions are perceived categorically regardless of whether the viewer has lexical categories that distinguish between the perceptual categories.’

Sauter et al, 2011 p. 1482

Sauter et al, 2011 figure 1

Sauter et al, 2011 figure 4

But could it be merely an effect of extraneous visual differences?

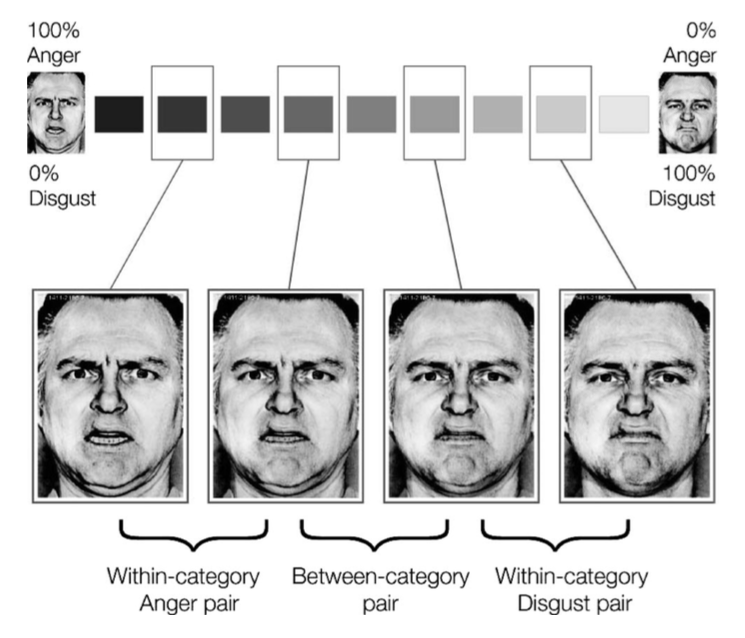

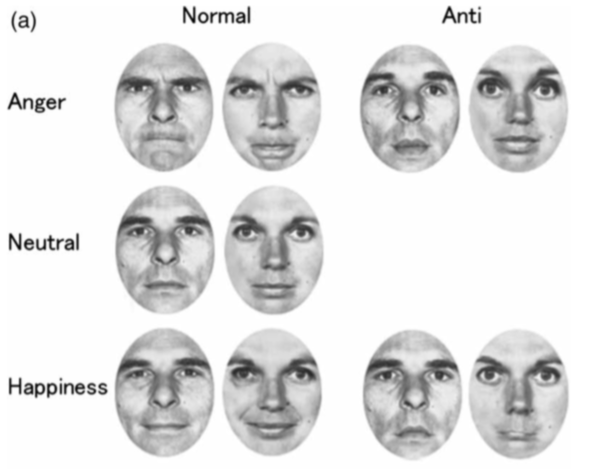

Sato and Yoshikawa, 2009 figure 1A

‘we reversed the direction of the facial features of the emotional expressions but retained the general configuration. For example, if the angry expression had V-shaped eyebrows and the neutral expression had horizontal eyebrows, our computer manipulation generated faces with eyebrows shaped in an upside-down V shape.’

Sato and Yoshikawa, 2009 p. 371

Sato and Yoshikawa, 2009 figure 1B

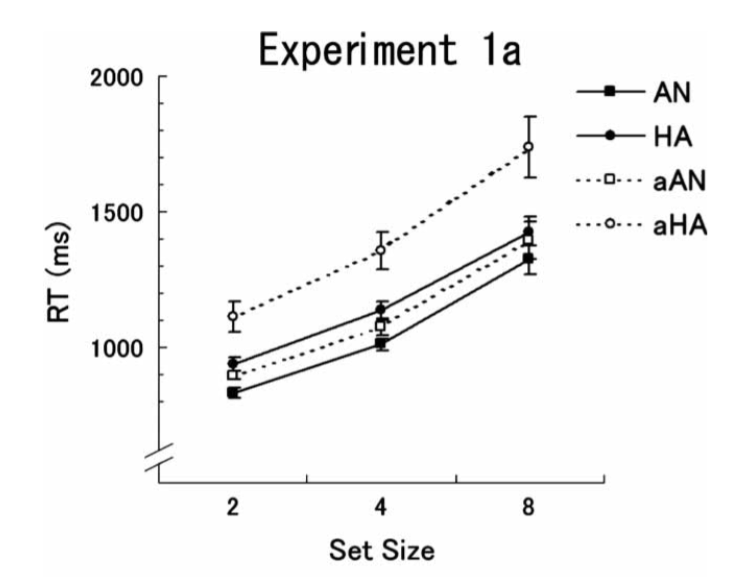

Sato and Yoshikawa, 2009, figure 2A

‘the normal angry and happy expressions were detected faster than were the respective anti-expressions.’

‘detection of an emotional expression was superior even when the effects of stimulus visual characteristics were controlled.’

Or ...

‘We sometimes see aspects of each others’ mental lives, and thereby come to have non-inferential knowledge of them.’

McNeill (2012, p. 573)

challenge

Evidence? Categorical Perception!

1. The objects of categorical perception, ‘expressions of emotion’, are facial configurations.

2. Facial configurations are not emotions.

so ...

3. The things we perceive in virtue of categorical perception are not emotions.

Aviezer’s Puzzle about Categorical Perception

What are the perceptual processes supposed to categorise?

Aviezer et al (2012, figure 2A3)

Aviezer et al’s puzzle

Given that facial configurations are not diagnostic of emotion, why are they categorised by perceptual processes?

‘[A]lthough the faces are inherently ambiguous, viewers experience illusory affect and erroneously report perceiving diagnostic affective valence in the face’

Aviezer et al (2012, p. 1228)

... maybe they aren’t.

1. The objects of categorical perception, ‘expressions of emotion’, are facial configurations.

2. Facial configurations are not emotions.

so ...

3. The things we perceive in virtue of categorical perception are not emotions.

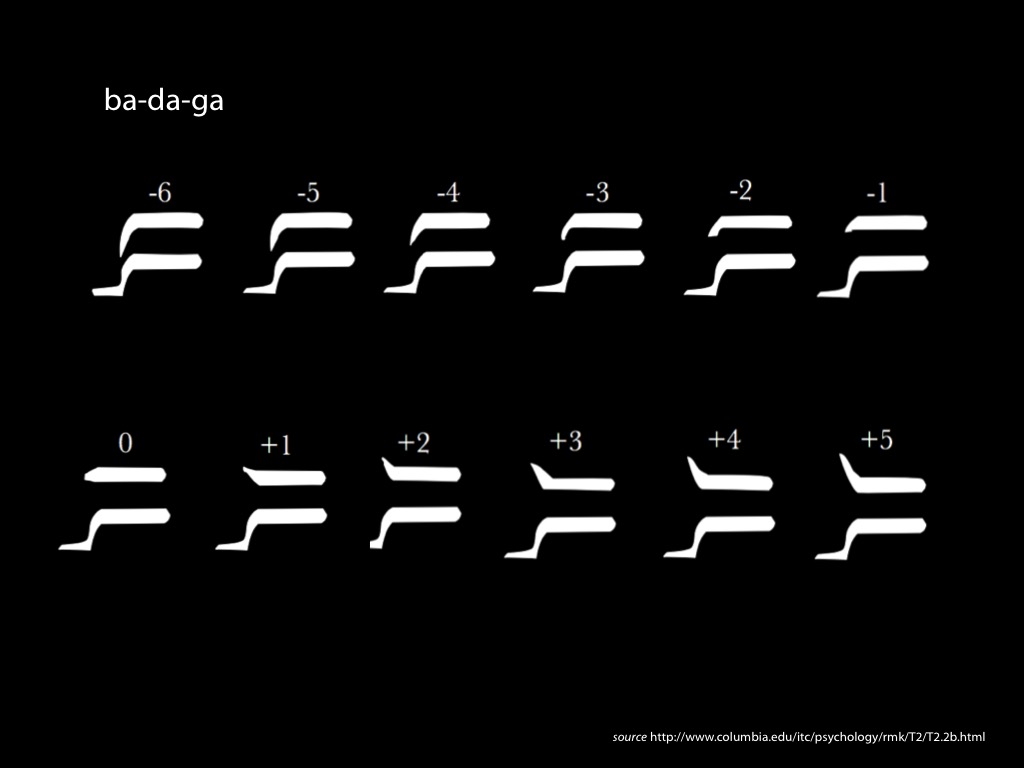

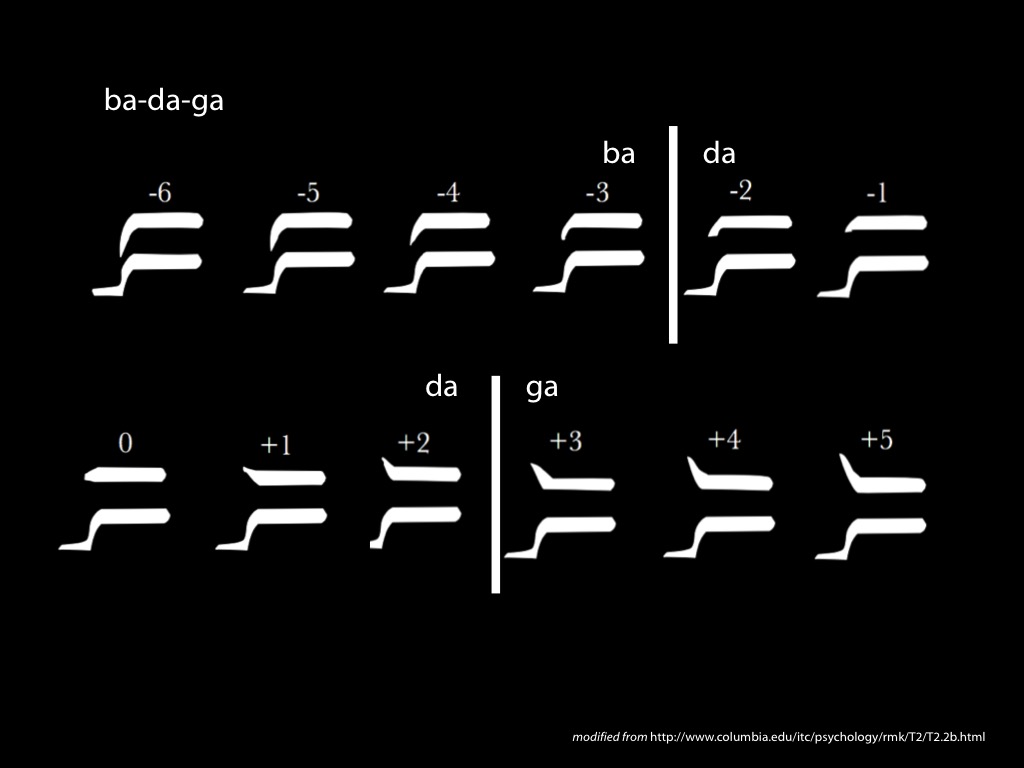

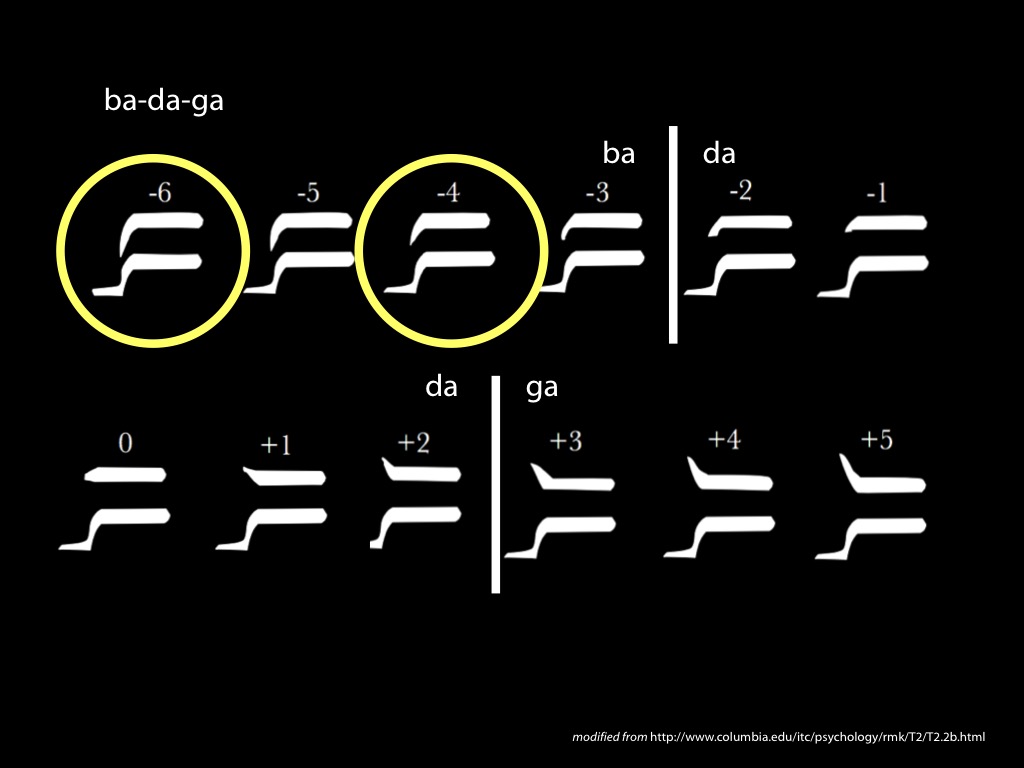

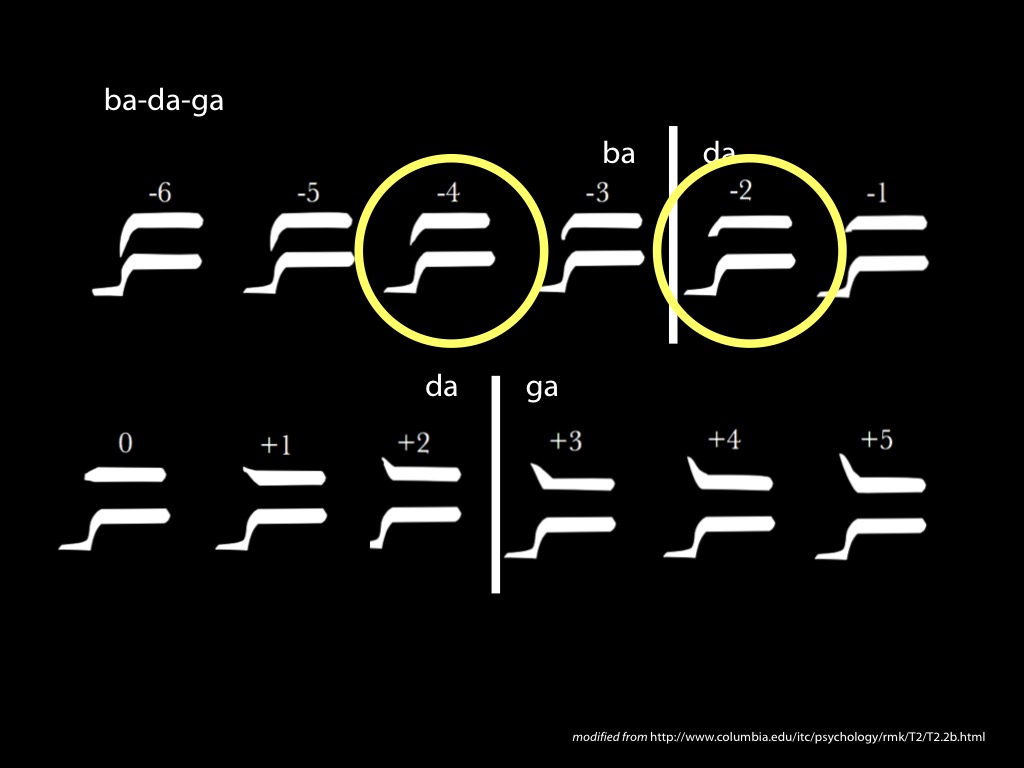

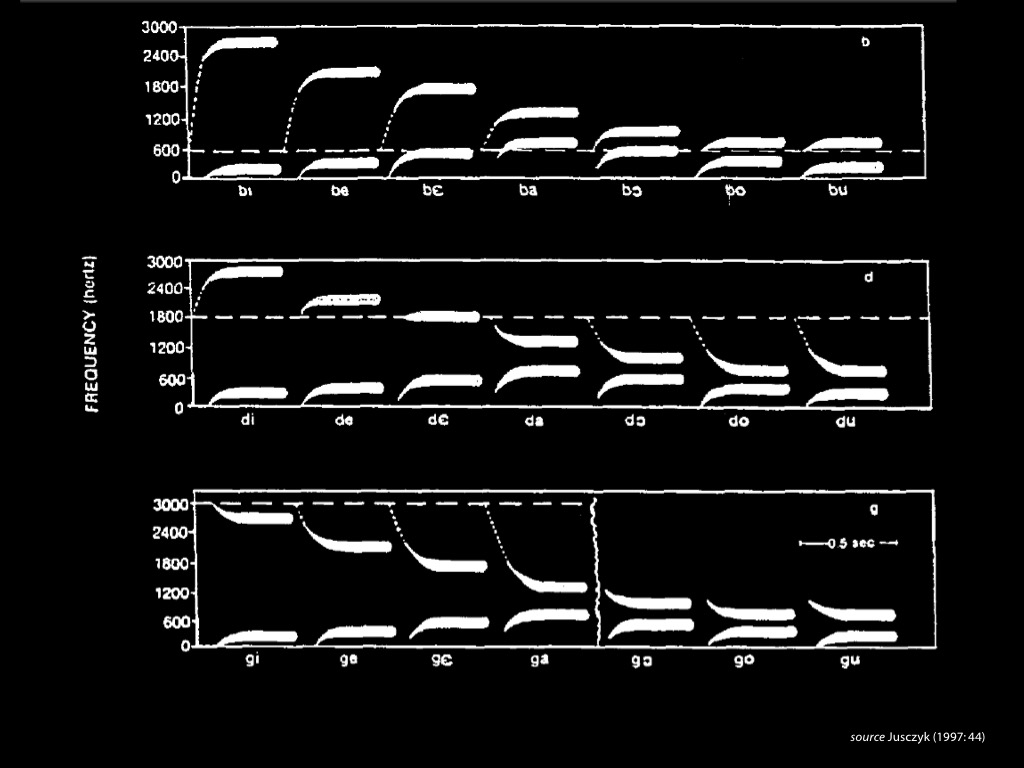

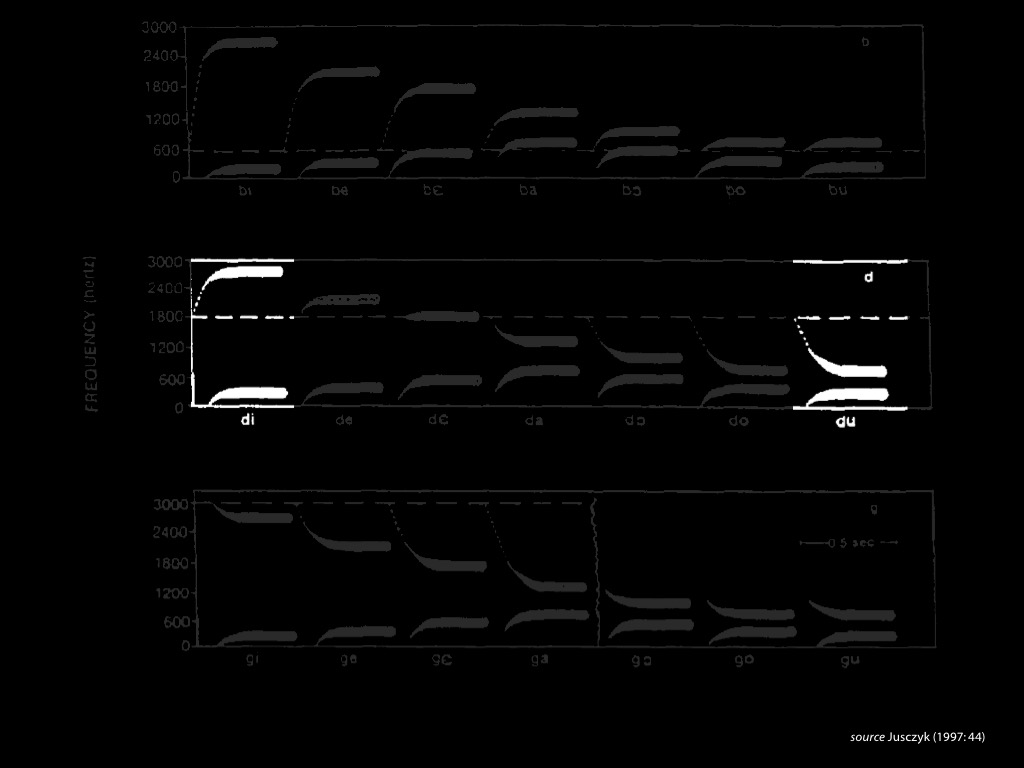

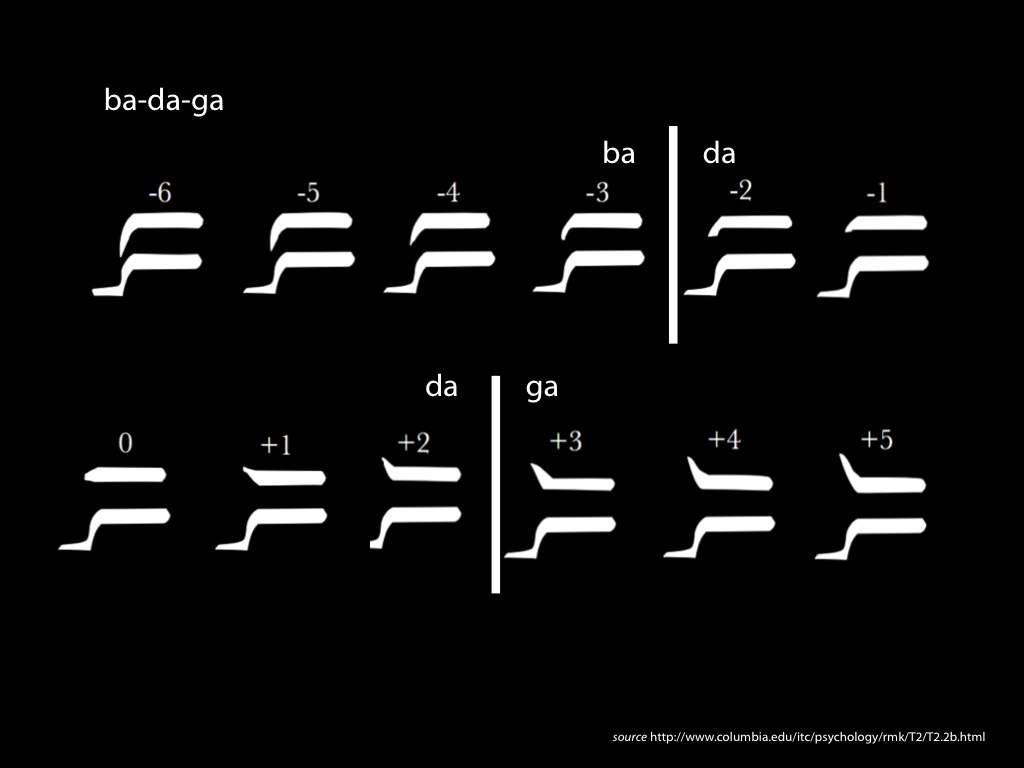

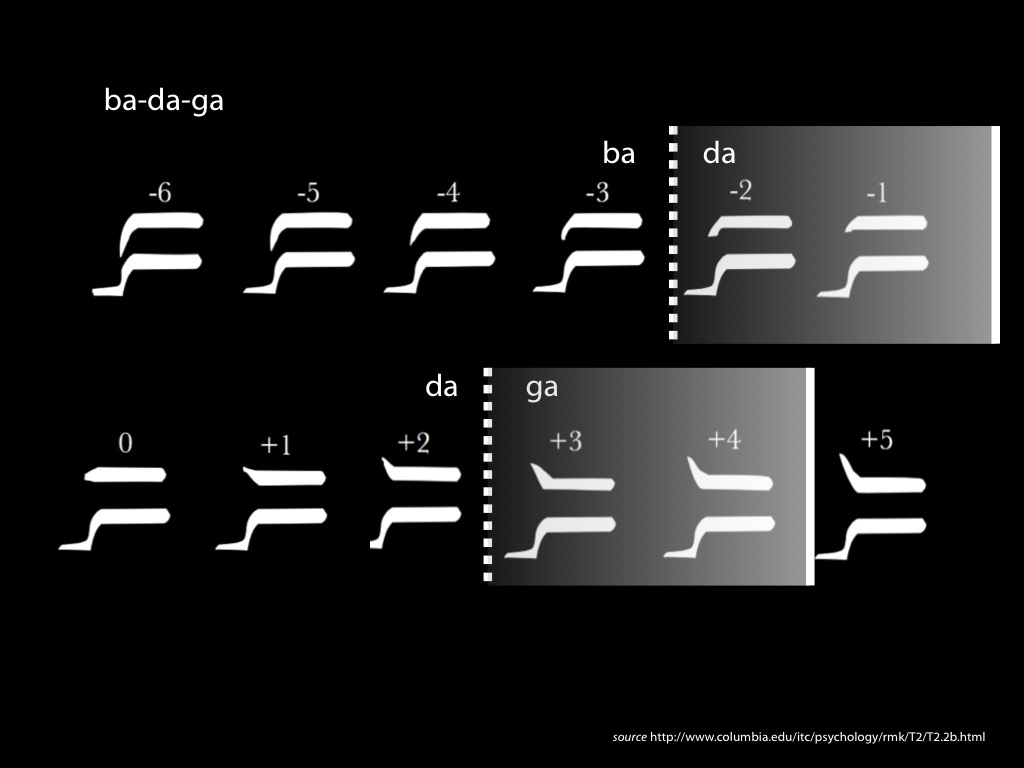

Categorical Perception of Speech

What determines where the category boundaries fall?

(1) There are category boundaries … .

(2) … which correspond to phonic gestures.

(3) Facts (1) and (2) stand in need of explanation.

(4) The best explanation of (1) and (2) involves the claim that the objects of speech perception are phonic gestures.

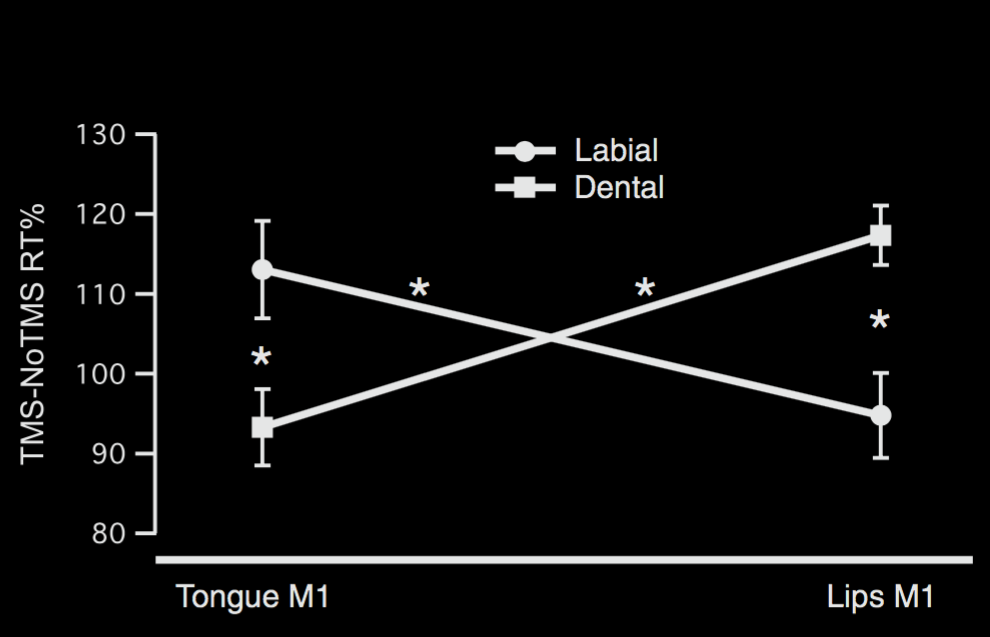

‘word listening produces a phoneme specific activation of speech motor centres’

‘Phonemes that require in production a strong activation of tongue muscles, automatically produce, when heard, an activation of the listener's motor centres controlling tongue muscles.’

Fadiga et al (2002)

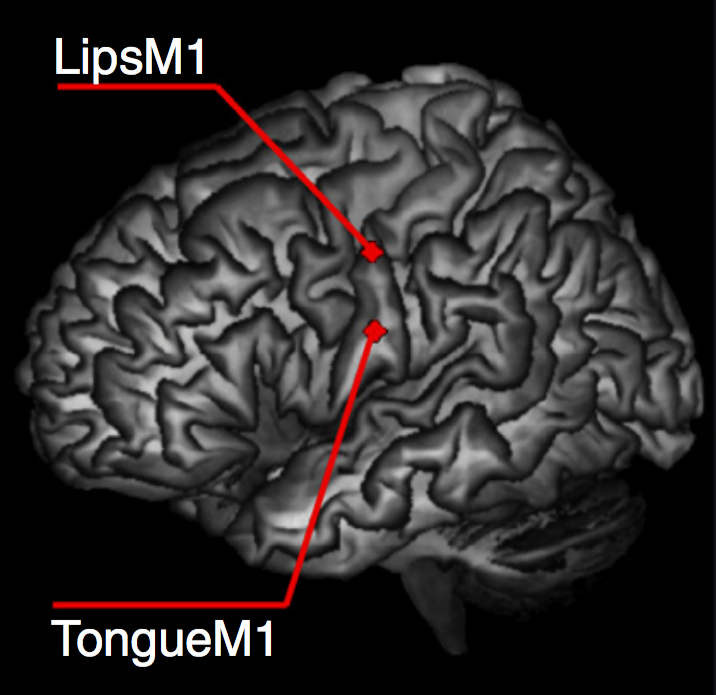

D'Ausilio et al (2009, figure 1)

(1) There are category boundaries … .

(2) … which correspond to phonic gestures.

(3) Facts (1) and (2) stand in need of explanation.

(4) The best explanation of (1) and (2) involves the claim that the objects of speech perception are phonic gestures.

The Objects of Categorical Perception

| phonic gesture | expression of emotion |
| --- | --- |
| isolated acoustic signals not diagnostic | isolated facial expressions not diagnostic |
| communicative function | communicative function ??? |
| complex, coordinated, goal-directed movements | complex, coordinated, goal-directed movements |

cultural variation

is partially explained by historical heterogeneity

and perhaps driven by communicative needs

another comparison

| categorical perception of speech | categorical perception of expressions of emotion |
| --- | --- |
| the objects of categorical perception are not acoustic signals | the objects of categorical perception are not facial configurations |
| they are actions directed to the goal of performing a particular phonic gesture | they are actions directed to the goal of expressing a particular emotion |

Aviezer et al’s puzzle

Given that facial configurations are not diagnostic of emotion, why are they categorised by perceptual processes?

Reply:

Facial configurations are not what perceptual processes are supposed to categorise; instead, they are supposed to categorise actions directed to the goal of expressing a particular emotion.

1. The objects of categorical perception, ‘expressions of emotion’, are facial configurations.

2. Facial configurations are not emotions.

so ...

3. The things we perceive in virtue of categorical perception are not emotions.

‘We sometimes see aspects of each others’ mental lives, and thereby come to have non-inferential knowledge of them.’

McNeill (2012, p. 573)

challenge 2

Evidence? Categorical Perception!

Which model of the emotions?

How could the objects of categorical perception be actions?

What are the perceptual processes supposed to categorise?

Actions whose goals are to express certain emotions.

- The perceptual processes categorise events (not e.g. facial configurations).

- These events are not mere physiological reactions.

- These events are perceptually categorised by the outcomes to which they are directed.

?

How could the objects of categorical perception be actions directed to the goals of expressing particular emotions?

disgust: first-person experience vs third-person observation

-- common activation

-- common impairment

Wood et al’s Sensorimotor Theory*

1. Observer covertly recreates expression

2. Recreation activates associated emotion-related processes

‘[S]imulating another's facial expression can fully or partially activate the associated emotion system in the brain of the perceiver.’

3. This activation ‘is the basis from which accurate facial expression recognition is achieved’

-- TMS to motor and somatosensory areas slows recognition of changes in facial expressions

-- inhibiting facemasks reduce accuracy in a facial expression discrimination task

Wood et al (2016, pp. 229, 231)

✓

How could the objects of categorical perception be actions directed to the goals of expressing particular emotions?

conclusion

‘We sometimes see aspects of each others’ mental lives, and thereby come to have non-inferential knowledge of them.’

McNeill (2012, p. 573)

challenge

Evidence? Categorical Perception!

1. The objects of categorical perception, ‘expressions of emotion’, are facial configurations.

2. Facial configurations are not emotions.

so ...

3. The things we perceive in virtue of categorical perception are not emotions.

‘We sometimes see aspects of each others’ mental lives, and thereby come to have non-inferential knowledge of them.’

McNeill (2012, p. 573)

challenge 2

Evidence? Categorical Perception!

Which model of the emotions?